Compute with Hivenet

Fine-tune and train AI models with high-performance cloud GPUs

Scale your AI workflows with affordable, high-performance GPUs. Fine-tune Mistral, LLAMA, and more in minutes using our cloud-based compute. Access powerful Nvidia RTX 4090 and RTX 5090 for seamless AI training and inference.

Save up to 70% compared to major cloud providers

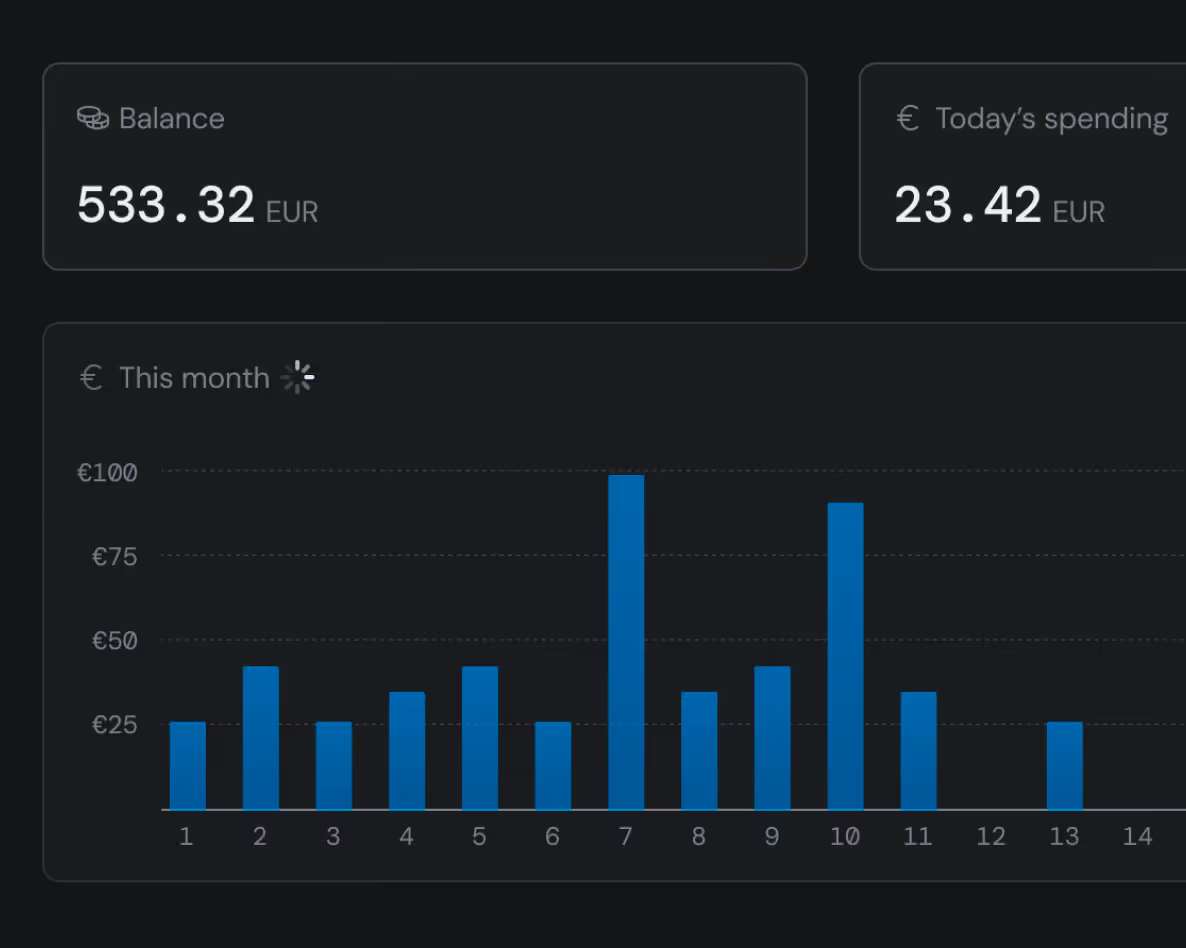

Pay only for what you use, down to the second.

No hidden fees, no long-term commitments.

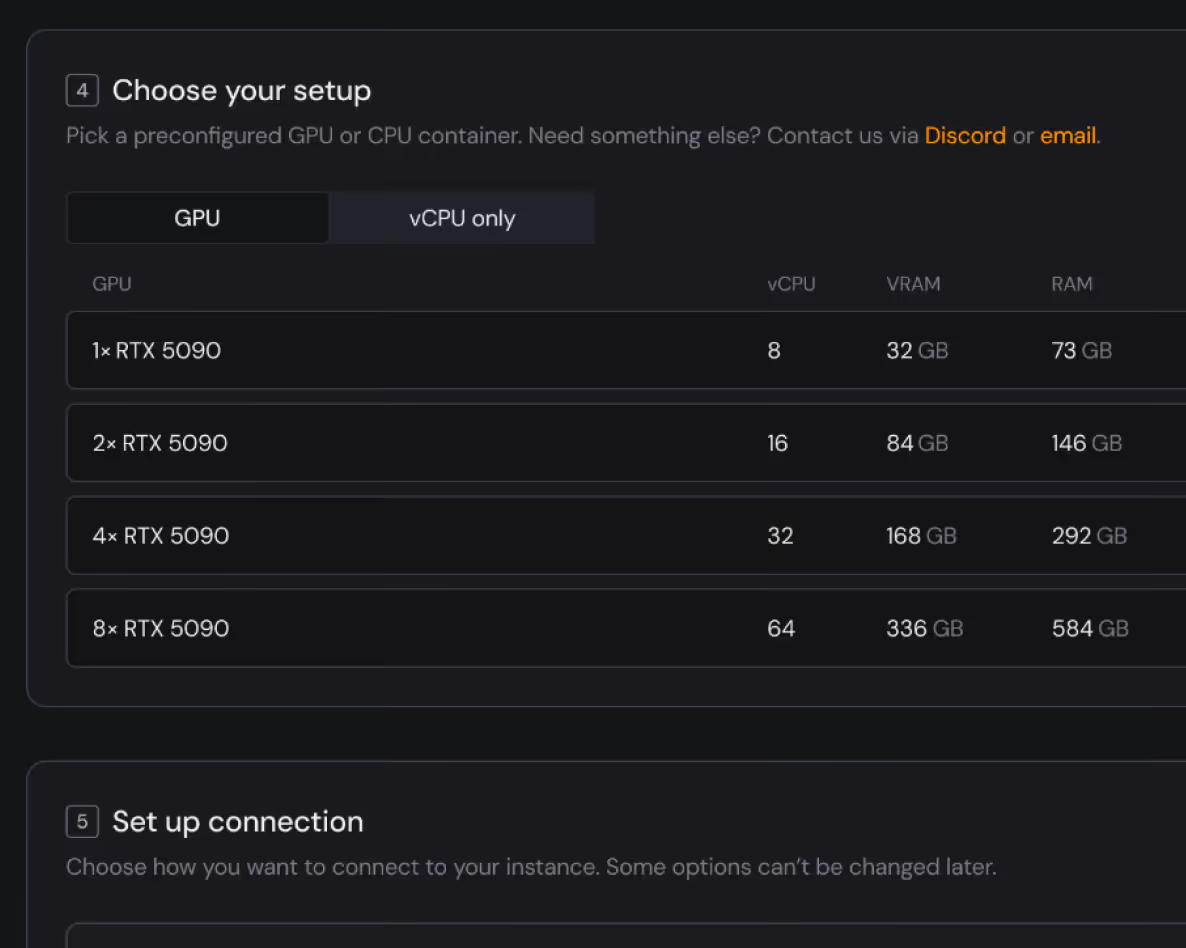

RTX 5090

1 ×

vCPU -

RAM - GB

Disk Space - GB

Bandwidth - Mb/s

€-/h

2 ×

vCPU -

RAM - GB

Disk Space - GB

Bandwidth - Mb/s

€-/h

4 ×

vCPU -

RAM - GB

Disk Space - GB

Bandwidth - Mb/s

€-/h

8 ×

vCPU -

RAM - GB

Disk Space - GB

Bandwidth - Mb/s

€-/h

RTX 4090

1 ×

vCPU -

RAM - GB

Disk Space - GB

Bandwidth - Mb/s

€-/h

2 ×

vCPU -

RAM - GB

Disk Space - GB

Bandwidth - Mb/s

€-/h

4 ×

vCPU -

RAM - GB

Disk Space - GB

Bandwidth - Mb/s

€-/h

8 ×

vCPU -

RAM - GB

Disk Space - GB

Bandwidth - Mb/s

€-/h

Who runs on Compute with Hivenet

Researchers, startups, studios, and enterprise teams run production workloads on this infrastructure. Not a sandbox.

Compute has everything your workload needs

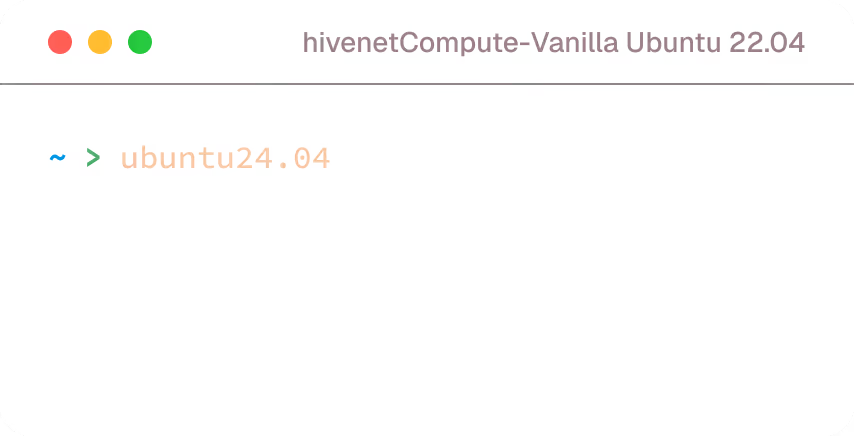

Get started in seconds

Start training your model immediately after you sign up

Preloaded with the right ML frameworks.

Root access, connect with SSH.

Quick and simple configuration.

High performance instances

Train your models on highly-provisioned instances

High ratio of vCPU, RAMs and SSD per GPU for each instance.

Up to 1 Gb/S internet connectivity per instance.

Affordable GPUs with per-second billing

No hidden costs. Just straightforward, competitive pricing

No ingress/egress costs.

No extra costs for RAM, vCPU, or storage.

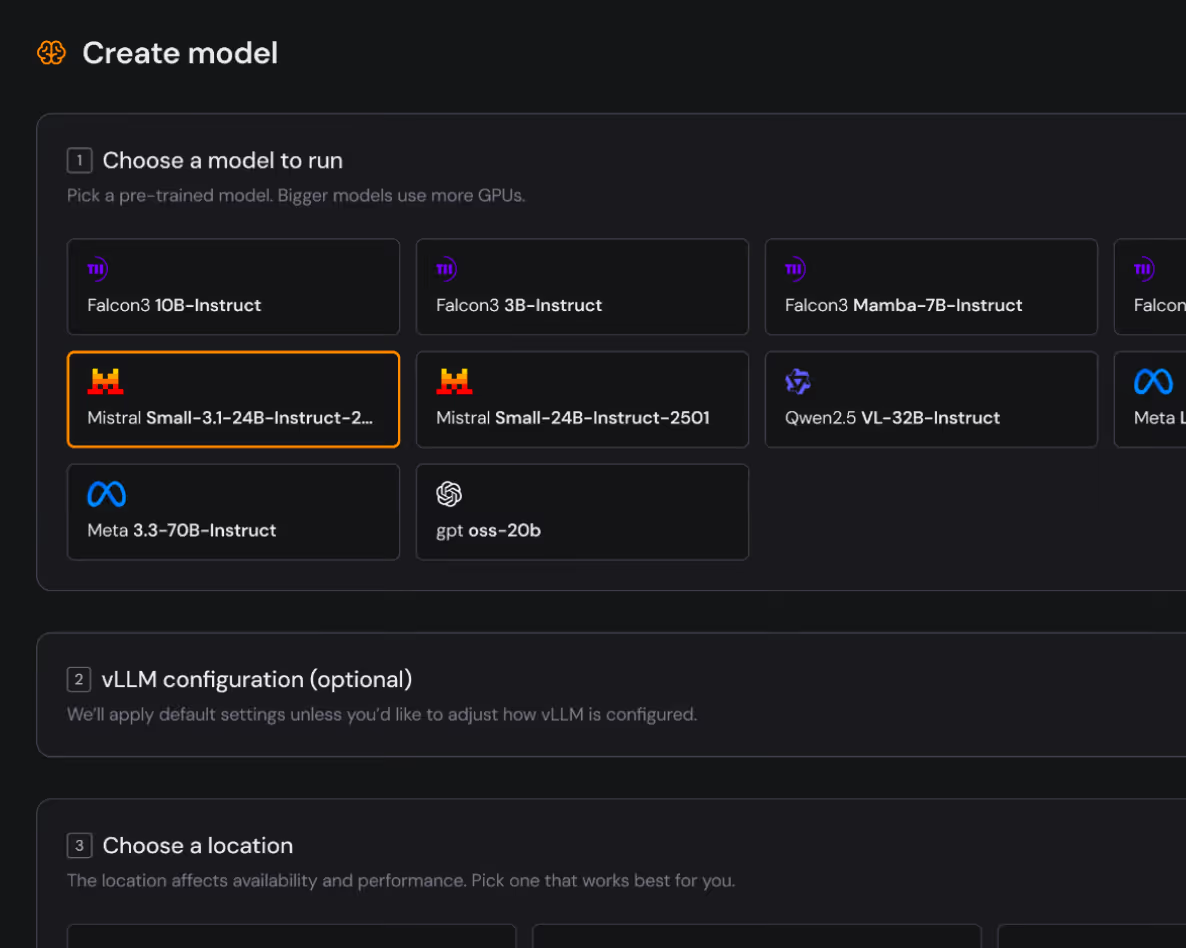

Managed inference with vLLM

Launch a vLLM server in a few clicks

Launch a vLLM server in a few clicks. Set context window and concurrency, stream tokens, and keep throughput high with continuous batching

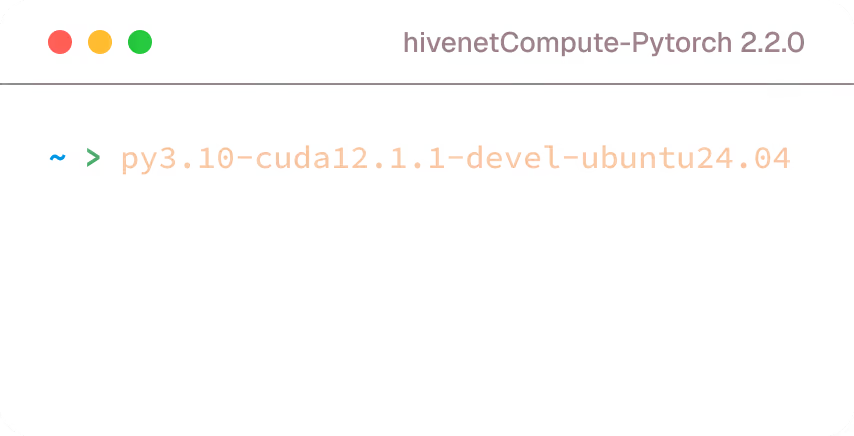

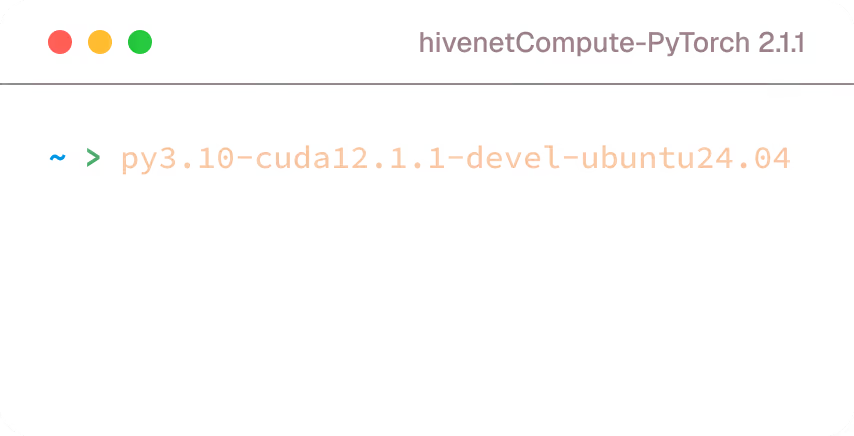

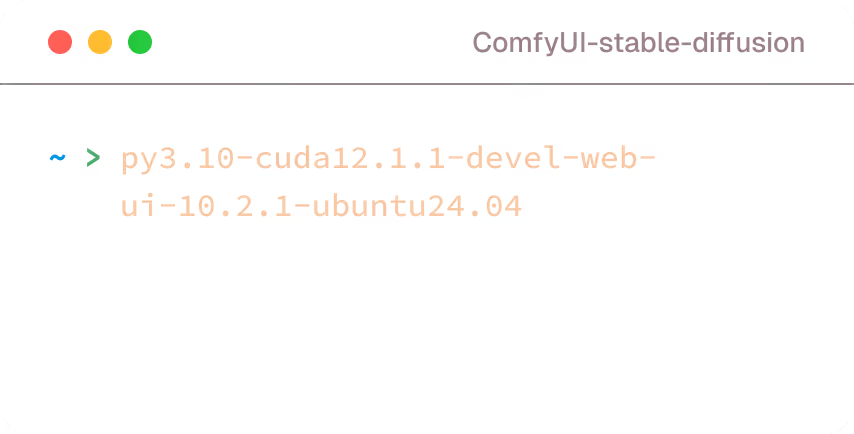

Run intensive workloads with confidence

Choose the right ML setup for your AI training needs

Best GPUs for AI inference.

Don’t miss this opportunity to scale your workflows with unmatched performance and savings.