Early access — pilot customers onboarding now.

Private AI on sovereign infrastructure.

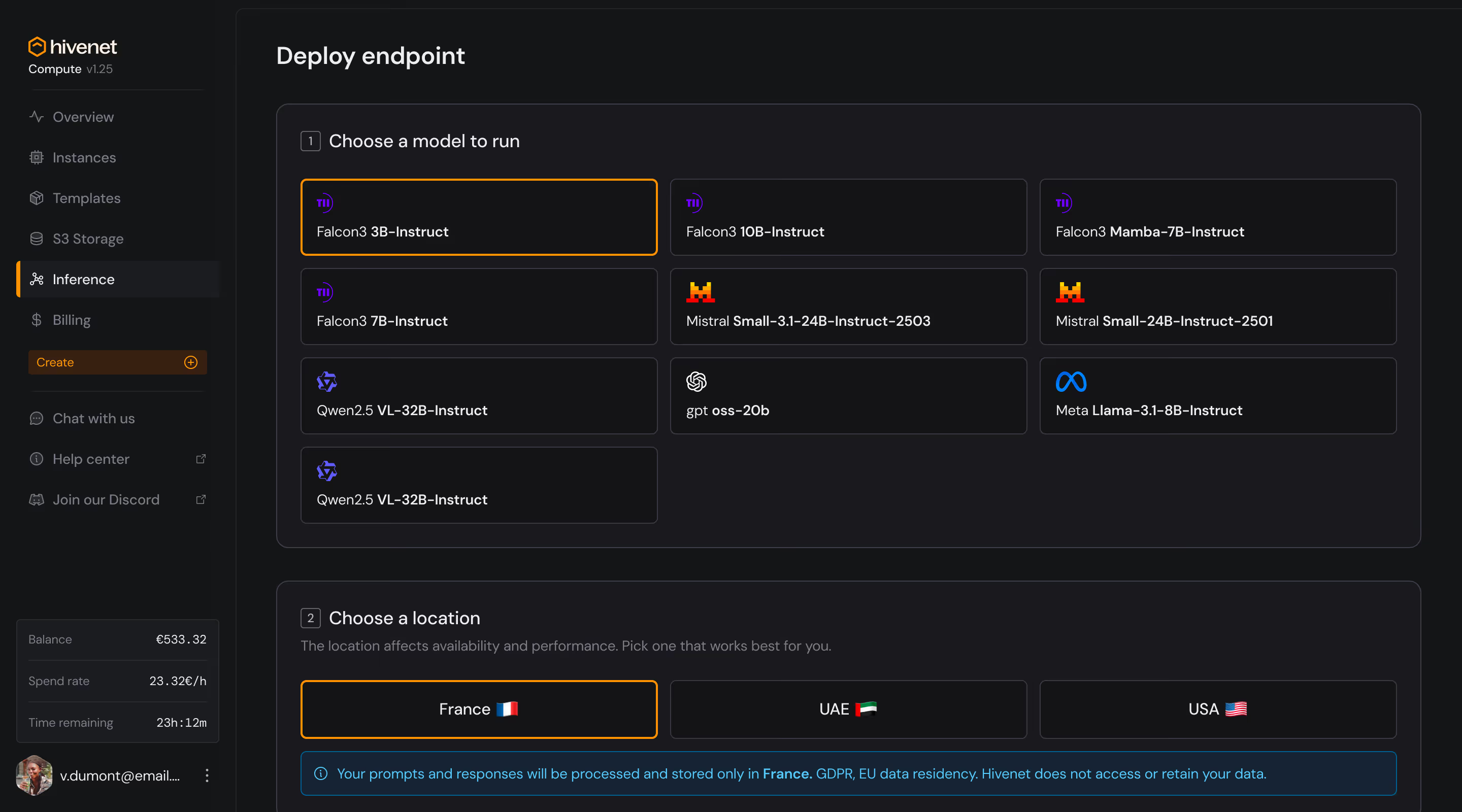

Run open-source LLMs on dedicated RTX 4090 and RTX 5090 GPUs. 45.4 ms time to first token (TTFT). Your data never leaves your infrastructure. No shared tenancy. No third-party exposure. EU, UAE, and USA jurisdictions.

What Hivenet runs for you.

Deploy open-source language models on dedicated RTX 4090 or RTX 5090 GPUs. Per-second billing. Access controlled by cryptographic architecture — not policy.

Use cases include:

Internal assistants and knowledge retrieval on private data.

Document summarization for regulated industries.

Decision-support tools built on your internal data sets.

How we take you from proof-of-concept to production.

Model selection and optimization

We map your workload to the right open-source model and GPU configuration.

Data preparation

We help structure and secure your training or retrieval data on your infrastructure.

Application development

Chat interfaces, search, or custom AI tooling. Built on your stack.

Rollout

Controlled deployment with ongoing engineering support. Not a one-time handoff.

Pilot scope and timeline are defined at the technical consultation. If you know what you need, we can compress steps.

Your AI. Your data. Your jurisdiction.

Data access restricted by architecture — not by policy. Private Hivenet instance. No shared tenancy.

No US parent company. No CLOUD Act exposure. Your inference runs on EU, UAE, or USA infrastructure — your choice.

GDPR-compliant. ISO 27001-certified infrastructure (via Policloud). SOC 2 in progress.

GPU compute pricing. No AI services markup.

RTX 5090

1× to 8× GPUs. Specifications per configuration: vCPU, RAM (GB), disk space (GB), bandwidth (Mb/s).

RTX 4090

1× to 8× GPUs. Specifications per configuration: vCPU, RAM (GB), disk space (GB), bandwidth (Mb/s).

Per-second billing. You pay for GPU time only — the AI services layer adds no markup.
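To make the per-second arithmetic concrete, here is a minimal sketch of how a run's cost could be worked out. The function name, the hourly rate of 0.72 per GPU, and the rounding to two decimal places are all illustrative assumptions, not published Hivenet pricing or billing rules.

```python
from decimal import Decimal, ROUND_HALF_UP


def gpu_cost(seconds: int, gpus: int, hourly_rate_per_gpu: Decimal) -> Decimal:
    """Estimate the cost of a run billed per second of GPU time.

    Hypothetical helper: the rate and the two-decimal rounding are
    assumptions made for illustration only.
    """
    if seconds < 0 or gpus < 1:
        raise ValueError("seconds must be >= 0 and gpus must be >= 1")
    # Convert the hourly rate to a per-second rate.
    per_second = hourly_rate_per_gpu / Decimal(3600)
    # GPU time only: no separate services term is added.
    total = per_second * seconds * gpus
    return total.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


# Example with an assumed rate of 0.72 per GPU-hour:
# 2 GPUs for 15 min 30 s (930 s) -> 930 * 2 * 0.0002 = 0.372, rounded to 0.37
print(gpu_cost(930, 2, Decimal("0.72")))
```

Under the same assumed rate, a provider that rounded up to whole hours would charge 1.44 for that run, which is the gap per-second billing closes for short jobs.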

Engineering support and migration assistance are included in the pilot. No consulting fees.