Who runs on Compute with Hivenet

Researchers, startups, studios, and enterprise teams run production workloads on this infrastructure. Not a sandbox.

Who uses Compute.

Used by independent builders, researchers, students, creators, and teams testing ideas before they become larger deployments.

Built for real AI and compute workflows

Inference

Run inference with managed vLLM. RTX 4090: 737 tokens/s at sustained load. RTX 5090: 45.4 ms time to first token (TTFT).
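The two figures measure different things. TTFT is the delay before the first token arrives, and tokens per second is output volume over time. The sketch below shows one common way to compute both from streamed token timestamps. The function names are hypothetical, and this is not Hivenet's benchmark method: the model, prompt length, and concurrency behind the figures above aren't stated.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Sequence


@dataclass
class StreamTiming:
    """Timing summary for one streamed completion (all times in seconds)."""
    ttft_s: float        # time from sending the request to the first token
    tokens_per_s: float  # output tokens divided by end-to-end request time


def summarize_stream(request_sent: float, token_times: Sequence[float]) -> StreamTiming:
    """Compute TTFT and per-request output throughput from token arrival times."""
    if not token_times:
        raise ValueError("no tokens received")
    ttft = token_times[0] - request_sent
    total = token_times[-1] - request_sent
    tps = len(token_times) / total if total > 0 else float("inf")
    return StreamTiming(ttft_s=ttft, tokens_per_s=tps)


def aggregate_throughput(streams: Sequence[tuple[float, Sequence[float]]]) -> float:
    """Total output tokens across concurrent requests over the wall-clock span.

    Each item is (request_sent, token_times). This "under load" view counts
    every request's tokens against the same shared time window.
    """
    start = min(sent for sent, _ in streams)
    end = max(times[-1] for _, times in streams if times)
    tokens = sum(len(times) for _, times in streams)
    return tokens / (end - start)
```

Per-request throughput and aggregate throughput can differ widely. A server batching many requests can post a high aggregate number while each individual stream runs slower.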

Training and fine-tuning

Train and fine-tune on RTX 4090 and RTX 5090 instances. Per-second billing. Reusable environments.

Your existing tools work.

Managed vLLM

Serve open-source models without building the serving layer yourself. The endpoint is OpenAI-compatible, so clients written for the OpenAI API format can connect to it.
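A minimal sketch of an OpenAI-format chat request sent to a vLLM endpoint, using only the Python standard library. It assumes the deployment exposes vLLM's OpenAI-compatible `/v1/chat/completions` route. `BASE_URL`, `MODEL`, and the helper names are hypothetical placeholders, not Hivenet-documented values. The API key header only matters if the endpoint requires one.

```python
from __future__ import annotations

import json
import urllib.request

# Hypothetical placeholders: substitute your own endpoint URL and model name.
BASE_URL = "https://YOUR-ENDPOINT.example/v1"
MODEL = "YOUR-MODEL-NAME"


def build_chat_request(base_url: str, model: str, prompt: str,
                       api_key: str | None = None,
                       max_tokens: int = 128) -> urllib.request.Request:
    """Build a POST request for the OpenAI-format /chat/completions route."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    headers = {"Content-Type": "application/json"}
    if api_key:
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def send(req: urllib.request.Request) -> str:
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(req, timeout=60) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]
```

Against a live endpoint, `send(build_chat_request(BASE_URL, MODEL, "Hello"))` returns the model's reply. The official `openai` Python client works the same way when you pass the endpoint as its `base_url`.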

Ready templates

Preconfigured Ubuntu, PyTorch, and Jupyter Notebook templates. Start in under 60 seconds.

Custom templates

Save your environment. Reuse it on any future instance.

Run where your data must live.

GPU instances are available in France, the UAE, and the USA. Each region is sovereign by architecture: no cross-border data movement and no shared tenancy with other regions. Choose your region at launch.

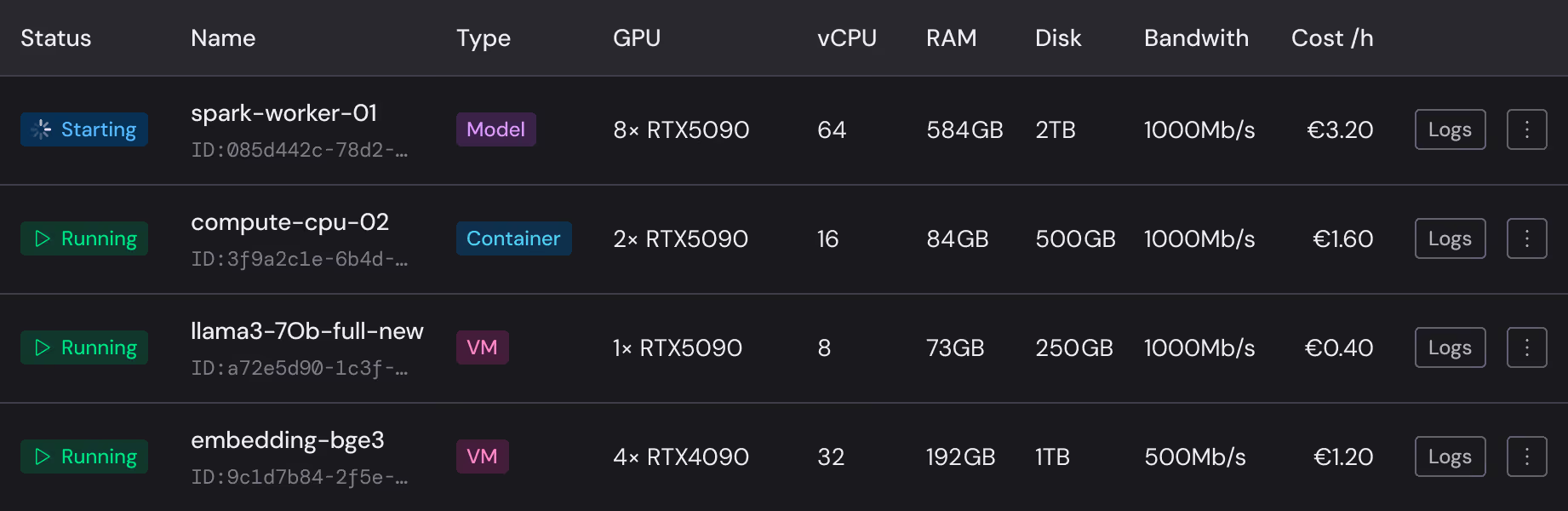

Specs and pricing

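RTX 5090

1× to 8× GPUs per instance. Each configuration specifies vCPU, RAM (GB), disk space (GB), and bandwidth (Mb/s).

RTX 4090

1× to 8× GPUs per instance. Each configuration specifies vCPU, RAM (GB), disk space (GB), and bandwidth (Mb/s).

vCPU

2 to 32 vCPUs per instance. Each configuration specifies RAM (GB), disk space (GB), and bandwidth (Mb/s).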

Per-second billing. No egress fees. Storage included.
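With per-second billing, the cost of a run is the hourly rate times the seconds used, divided by 3,600. The sketch below compares that with whole-hour rounding. The $0.60/hour rate is a hypothetical figure, not a Hivenet price. The sketch also assumes the hourly rate is the only charge, which is consistent with no egress fees and included storage.

```python
from decimal import Decimal, ROUND_HALF_UP


def per_second_cost(hourly_rate: Decimal, seconds: int) -> Decimal:
    """Cost of a run billed per second at an hourly rate, rounded to cents."""
    if seconds < 0:
        raise ValueError("seconds must be non-negative")
    cost = hourly_rate * seconds / Decimal(3600)
    return cost.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def per_hour_cost(hourly_rate: Decimal, seconds: int) -> Decimal:
    """Cost of the same run if billing rounded up to whole hours."""
    hours = -(-seconds // 3600)  # ceiling division on integers
    return hourly_rate * hours


rate = Decimal("0.60")  # hypothetical hourly rate, for illustration only
run_seconds = 1000      # a short job of about 17 minutes
print(per_second_cost(rate, run_seconds), per_hour_cost(rate, run_seconds))
```

For short or bursty jobs, per-second billing charges only for the time actually used. Hourly rounding would bill the 1,000-second job above as a full hour.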

Start self-serve, go deeper when needed

No sales call required for self-serve instances. Docs cover setup, templates, and API references.

Built on distributed infrastructure — sovereign by architecture.

No single data center. No hyperscaler dependency. Cryptographic sharding leaves no legal pathway to unauthorized data access. That is an architectural guarantee, not a policy.
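Hivenet's exact sharding scheme isn't specified here. As a toy illustration of the general idea that one shard on its own reveals nothing, the sketch below shows n-of-n XOR secret splitting. It is not Hivenet's implementation. A production system would also need redundancy, such as erasure coding, so that data survives a lost shard. The function names are hypothetical.

```python
from __future__ import annotations

import secrets


def split_xor(data: bytes, n: int) -> list[bytes]:
    """Split data into n shards; all n are needed to rebuild it.

    The first n-1 shards are random bytes, and the last is data XOR all of
    them. Any set of fewer than n shards is uniformly random.
    """
    if n < 2:
        raise ValueError("need at least two shards")
    shards = [secrets.token_bytes(len(data)) for _ in range(n - 1)]
    last = bytearray(data)
    for shard in shards:
        for i, b in enumerate(shard):
            last[i] ^= b
    shards.append(bytes(last))
    return shards


def combine_xor(shards: list[bytes]) -> bytes:
    """XOR every shard together to recover the original bytes."""
    out = bytearray(len(shards[0]))
    for shard in shards:
        for i, b in enumerate(shard):
            out[i] ^= b
    return bytes(out)
```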

Ready to get started?

Try Compute

Launch a GPU instance now. Self-serve. Per-second billing from the first second.
