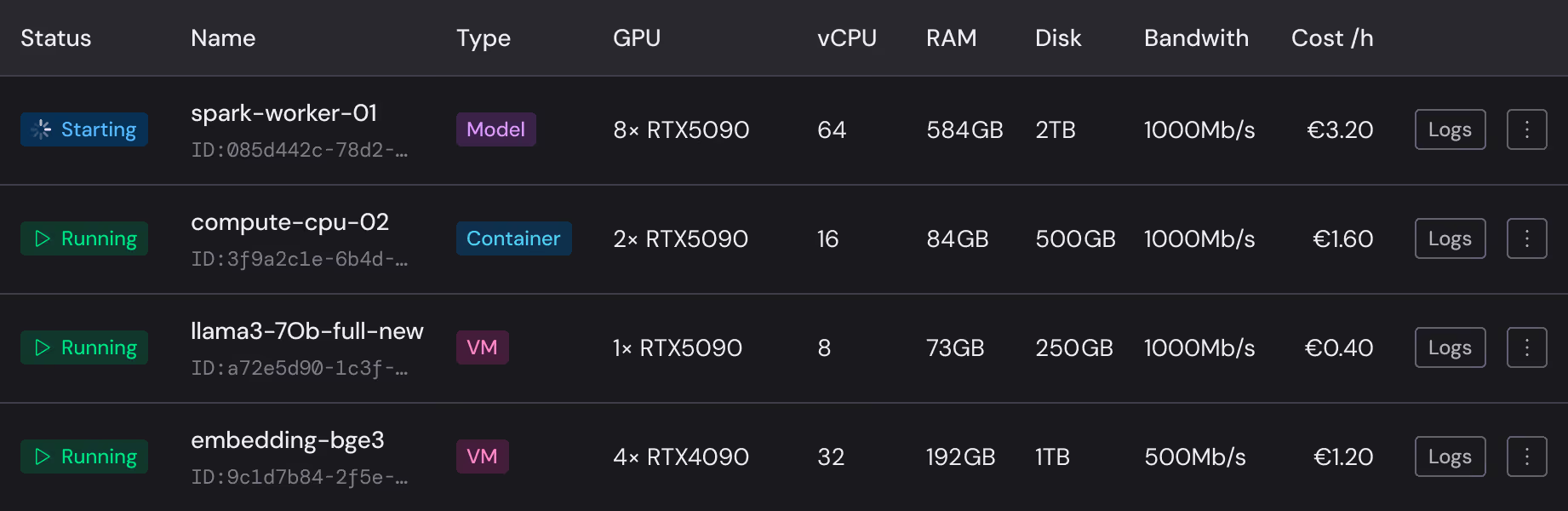

Compute with Hivenet

RTX 4090 from €-/h. RTX 5090 from €-/h. Per-second billing. Network traffic included.

Self-serve GPU compute. Sovereign by architecture. Available in France, UAE, and USA.

Who runs on Compute with Hivenet

Researchers, startups, studios, and enterprise teams run production workloads on this infrastructure. It is not a sandbox.

Independent builders, students, and creators also use it to test ideas before they grow into larger deployments.

What you can do with it.

Training and fine-tuning

Train on RTX 4090 and RTX 5090 instances. Reusable environments. Per-second billing. Your training data stays on the instances you control.

Rendering and creative work

Blender, video encoding, upscaling. Dedicated GPU instances. Not queued. Not shared.

Research and experiments

Simulations and notebooks. On-demand. No minimum commitment.

Inference

Run models locally at 737 tokens/s on an RTX 4090, or with a 45.4 ms time to first token (TTFT) on an RTX 5090. Private. No third-party API calls.

Get started fast.

Managed vLLM

Deploy an OpenAI-compatible inference endpoint without building the serving layer yourself.
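Because the endpoint is OpenAI-compatible, a client that speaks the OpenAI Chat Completions format should be able to call it. Below is a minimal sketch using only the Python standard library. The base URL, API key, and model name are hypothetical placeholders, and Bearer-token authentication is an assumption about how a managed endpoint is secured, not a documented Hivenet detail.

```python
import json
import urllib.request

# Hypothetical placeholders: replace with the values for your own deployment.
BASE_URL = "https://your-endpoint.example.com"
API_KEY = "YOUR_API_KEY"
MODEL = "your-model-name"


def build_chat_request(base_url, api_key, model, prompt, max_tokens=128):
    """Build a POST request for an OpenAI-compatible /v1/chat/completions route."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Assumed Bearer-token auth, as used by OpenAI-compatible servers.
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send_chat(request, timeout=30):
    """Send the request and return the text of the first completion choice."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Once the placeholders point at a real deployment, `send_chat(build_chat_request(BASE_URL, API_KEY, MODEL, "Hello"))` returns the model's reply. The official `openai` Python client can also target such an endpoint through its `base_url` parameter.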

Ready templates

Ubuntu, PyTorch, and Jupyter Notebook. Pre-configured. Start in under 60 seconds.

Custom templates

Save your setup. Reuse it.

Currently on RunPod or Google Colab?

Bring your setup. Same tools. European infrastructure. Lower cost.

Per-second billing. No egress fees. Storage included.
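Per-second billing means a run is charged only for the seconds it uses: cost = hourly rate × seconds / 3600. The sketch below illustrates that arithmetic with a hypothetical rate of €0.60/h, which is a placeholder and not a Hivenet price. For comparison, it also computes what a hypothetical provider that rounds every run up to a full hour would charge.

```python
from decimal import Decimal, ROUND_HALF_UP


def per_second_cost(hourly_rate_eur, seconds):
    """Cost of a run billed per second at the given hourly rate."""
    rate = Decimal(str(hourly_rate_eur))
    cost = rate * Decimal(seconds) / Decimal(3600)
    # Round to four decimal places of a euro for display.
    return cost.quantize(Decimal("0.0001"), rounding=ROUND_HALF_UP)


def per_hour_rounded_cost(hourly_rate_eur, seconds):
    """Cost if every started hour were billed in full (hypothetical comparison)."""
    hours = -(-seconds // 3600)  # ceiling division
    return Decimal(str(hourly_rate_eur)) * hours


# A 25-minute (1500 s) job at a hypothetical €0.60/h:
print(per_second_cost(0.60, 1500))        # per-second billing
print(per_hour_rounded_cost(0.60, 1500))  # full-hour rounding
```

Here the 25-minute job costs €0.25 under per-second billing, versus €0.60 if the hour were rounded up.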

| Configuration | Units | vCPU | RAM | Disk space | Bandwidth |
|---|---|---|---|---|---|
| RTX 5090 | 1× to 8× GPU | - | - GB | - GB | - Mb/s |
| RTX 4090 | 1× to 8× GPU | - | - GB | - GB | - Mb/s |
| vCPU | 2× to 32× vCPU | | - GB | - GB | - Mb/s |

Start now. No approval process.

No sales call required. A credit card is all you need.

Your GPU instances run on distributed infrastructure

No legal pathway for foreign government data requests.

77% greener than centralized cloud.

No offsets.

Need Compute for a team?

If you need regional deployment, private AI, procurement support, or a larger rollout, explore Compute for business.